Mistral AI has released its first multimodal model, Pixtral 12B, which can process both text and images.

The model has 12 billion parameters and includes a dedicated vision encoder that supports image resolutions of up to 1024x1024 pixels. Built on Mistral's existing text model, Nemo 12B, it is designed to process an arbitrary number of images of varying sizes, which makes it flexible for complex multimodal tasks.

Mistral has opted for an open-source approach by making Pixtral 12B available for download via platforms like Hugging Face and GitHub. This allows developers to test and fine-tune the model according to their needs, promoting wider adoption and experimentation within the AI community.

Accessing Pixtral 12B

<Download from Hugging Face or GitHub>

Pixtral 12B is available for download on Hugging Face and GitHub. Developers can retrieve the model files directly and run tests on their own systems. The model is released under the Apache 2.0 license, which permits users to modify and distribute the software, provided they retain the license and attribution notices.

<Using Mistral’s Platforms>

Mistral plans to make Pixtral 12B accessible through its platforms, Le Chat and La Plateforme, which will provide API endpoints for developers to integrate the model into their applications. A web demonstration is expected to be available soon, allowing users to interact with the model directly via a chatbot interface.
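To make the API integration path more concrete, here is a minimal sketch of how a request mixing one text prompt with several images might be assembled once the endpoints are available. The endpoint URL, the model identifier `pixtral-12b-2409`, the message layout, and the `MISTRAL_API_KEY` environment variable are assumptions modeled on Mistral's chat completions API, not details confirmed by this article. The helper names `build_pixtral_request` and `send_request` are hypothetical.

```python
import json
import os
import urllib.request

# Assumed values, not confirmed by the article: check Mistral's documentation.
API_URL = "https://api.mistral.ai/v1/chat/completions"
MODEL_NAME = "pixtral-12b-2409"


def build_pixtral_request(prompt, image_urls, model=MODEL_NAME):
    """Build a chat payload with one text part and any number of image parts."""
    content = [{"type": "text", "text": prompt}]
    for url in image_urls:
        # Each image is passed by URL; the field layout is an assumption.
        content.append({"type": "image_url", "image_url": url})
    return {"model": model, "messages": [{"role": "user", "content": content}]}


def send_request(payload, api_key):
    """POST the payload as JSON and return the decoded JSON response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


if __name__ == "__main__":
    payload = build_pixtral_request(
        "Describe what these two images have in common.",
        ["https://example.com/chart.png", "https://example.com/photo.jpg"],
    )
    print(json.dumps(payload, indent=2))

    # Only contact the API when a key is present in the environment.
    api_key = os.environ.get("MISTRAL_API_KEY")
    if api_key:
        print(send_request(payload, api_key))
```

Keeping payload construction separate from the network call lets the request shape be inspected or adjusted without an API key, which is useful while the endpoint details are still unsettled.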

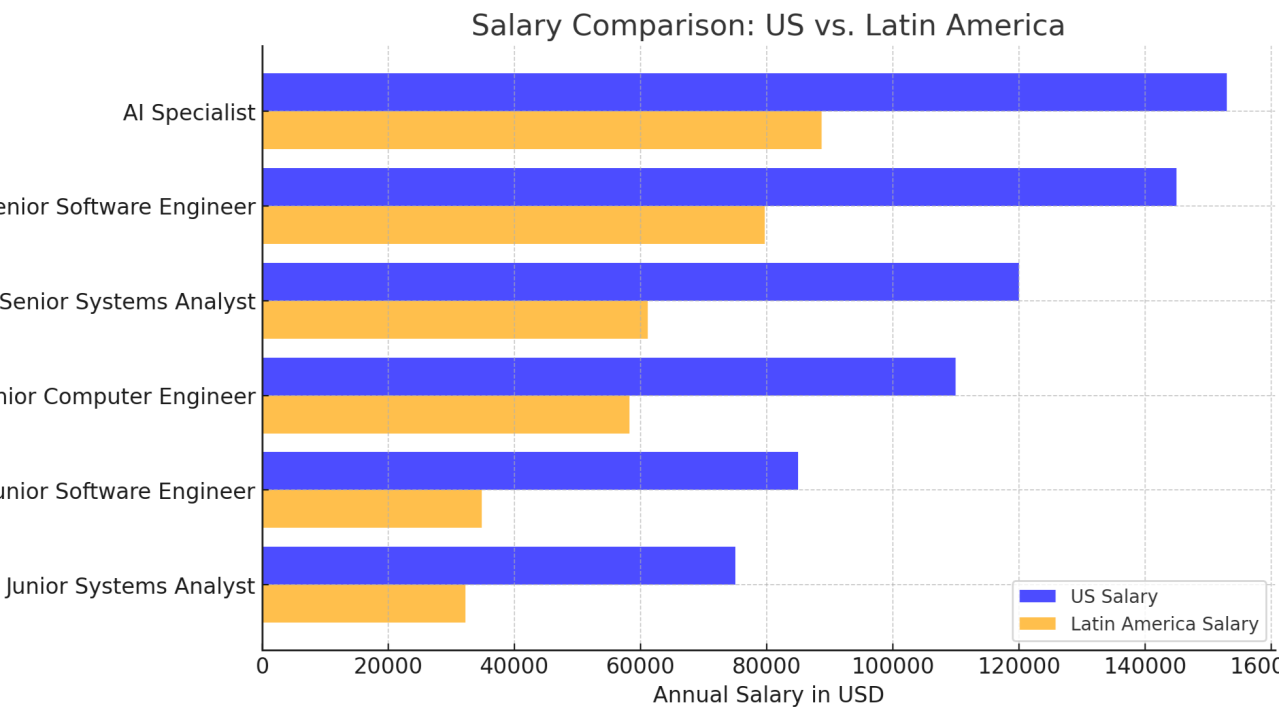

The shortage of technology professionals, including programmers, engineers, and analysts, has intensified in the United States due to the increasing demand for specialized skills and a limited supply of qualified talent.

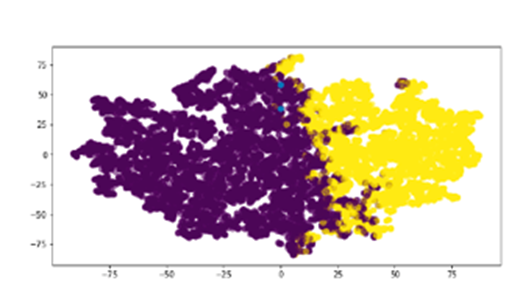

The need arise from the need to streamline the analysis of exams performed on patients who may present detectable pathologies through imaging studies, assisting the physician in making a faster and more accurate diagnosis.

Researchers at Sakana.AI, a Tokyo-based company, have worked on developing a large language model (LLM) designed specifically for scientific research.

Competing against ChatGPT Enterprise by OpenAI, now Anthropic released its own Claude for Enterprise.

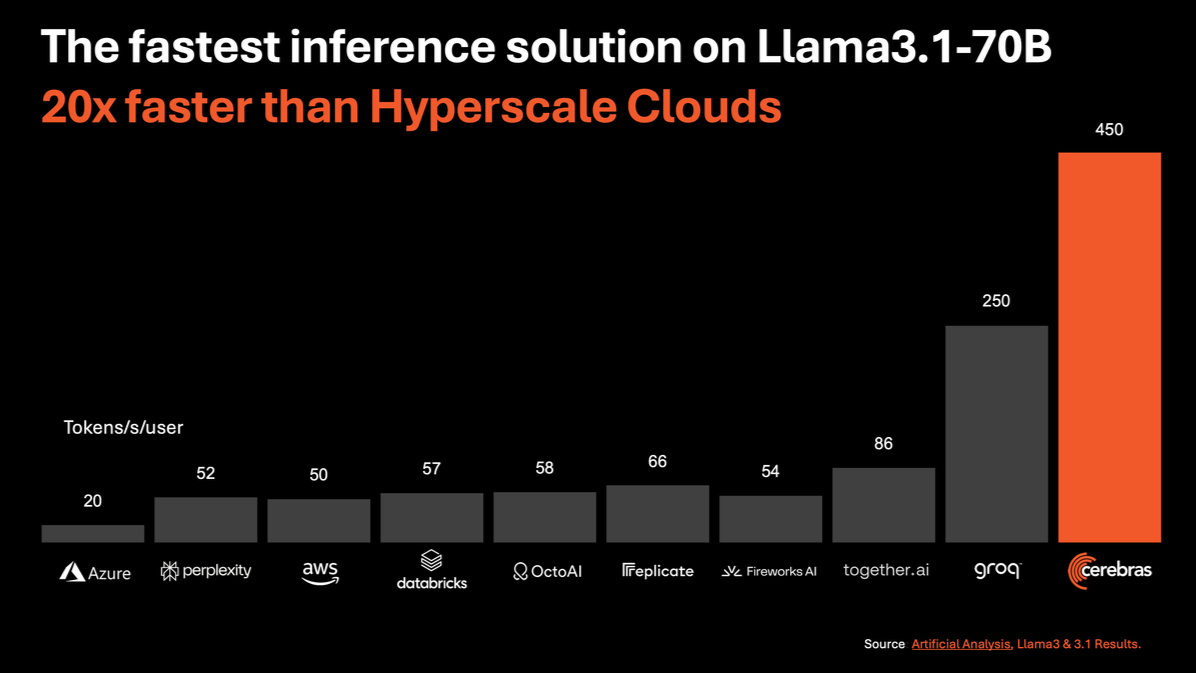

Cerebras Systems, known for its innovative Wafer Scale Engine (WSE), has received a mix of feedback regarding its processors, particularly compared to traditional GPUs like those from Nvidia.

FruitNeRF: Revolutionizing Fruit Counting with Neural Radiance FieldsHow can we accurately count different types of fruits in complex environments using 3D models derived from 2D images without requiring fruit-specific adjustments?